While making serious progress on texturing scanned surfaces, we found that we needed a better surface reconstruction and decimation technique. Until now, exported meshes contained hundreds of thousands of triangles, adding unnecessary overhead in regions that could be expressed with just a couple of triangles (e.g. planar regions). Additionally, we wanted to close small surface holes to allow smooth texturing across the surface.

Therefore, we redesigned our surface reconstruction pipeline to support more sophisticated reconstruction techniques and configurable surface decimation.

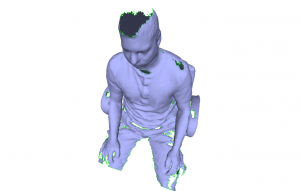

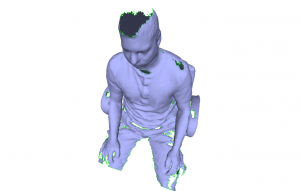

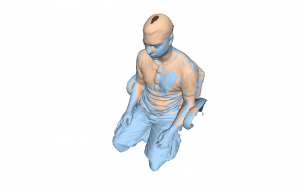

Below is an image that shows the original mesh as generated by the current version of ReMe (v. 0.6.0-405). It contains roughly 250,000 faces, and one can clearly spot the holes that remained because these areas were not visible while scanning.

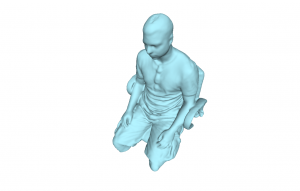

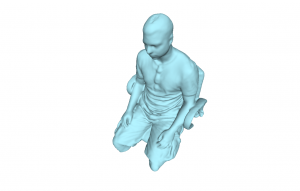

In contrast, the next image shows a successful reconstruction of the original surface reduced to 50,000 faces with boundary holes closed.

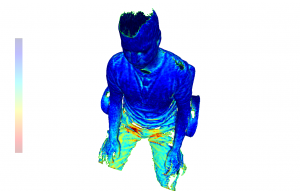

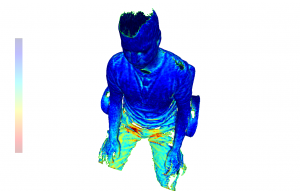

Comparing both meshes using the Hausdorff distance gives an average distance of 0.8 mm. The image below colorizes the distances (blue low, red high).

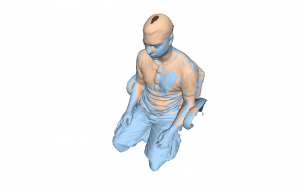

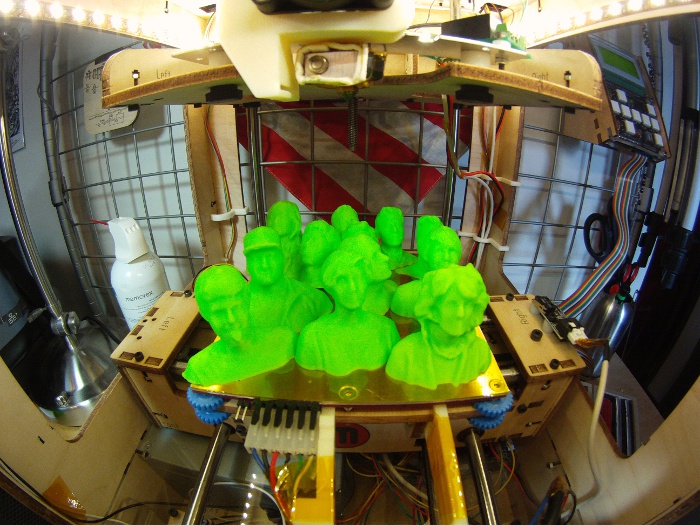
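For readers who want to try a comparison like the one above, here is a minimal sketch of how mean and maximum (Hausdorff) distances between two meshes can be estimated. It is not how ReMe computes the numbers; it assumes both meshes are given as plain lists of vertex coordinates and approximates point-to-surface distance by the distance to the nearest vertex. The function names (`one_sided_distances`, `compare`, `colorize`) are hypothetical, and the brute-force search is only practical for small point sets.

```python
import math

def one_sided_distances(source, target):
    """Distance from each source point to its nearest target point (brute force)."""
    return [min(math.dist(p, q) for q in target) for p in source]

def compare(a, b):
    """Symmetric comparison: mean and maximum (Hausdorff) nearest-point distance."""
    distances = one_sided_distances(a, b) + one_sided_distances(b, a)
    return {
        "mean": sum(distances) / len(distances),
        "hausdorff": max(distances),
    }

def colorize(distance, max_distance):
    """Map a distance to an RGB triple: blue for low values, red for high values."""
    t = 0.0 if max_distance == 0 else min(distance / max_distance, 1.0)
    return (t, 0.0, 1.0 - t)

# Two tiny "meshes" that differ in one vertex (coordinates in arbitrary units).
original = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
decimated = [(0.0, 0.0, 0.0), (1.0, 0.0, 1.0)]

result = compare(original, decimated)
print(result)
```

For real meshes with hundreds of thousands of faces, one would typically sample points on the surface and use a spatial index instead of the quadratic search shown here.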

Surface reconstruction isn’t limited to individual meshes; it can also be used to fuse multiple volumes into a single consistent mesh. The image below shows two individual meshes stitched using ReMe’s --multiscan feature.

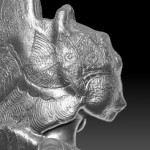

Here is the fused mesh as generated by the development version of ReMe.

Stay tuned!