Today, it’s our pleasure to announce a completely new scanning strategy that improves hand-held scanning in many ways. Although we are still at the prototype stage, we wanted to share the latest achievements with our readers.

Limitations of hand-held scanning

One of the major issues with hand-held scanning is that the set of tolerated scan motions does not match your natural sequence of movements. This means that you are often forced to move more slowly than intended, and you have to make sure that the scanner points at areas of interest and keeps a certain distance from the object being scanned. Violating any of these constraints leads to ‘tracking lost’ errors and corrupted data. We’ve seen inexperienced users frustrated by these implicit scanning assumptions more than once. Moreover, this frustration quickly turned into rejection of 3D scanning technology altogether.

Improving usability

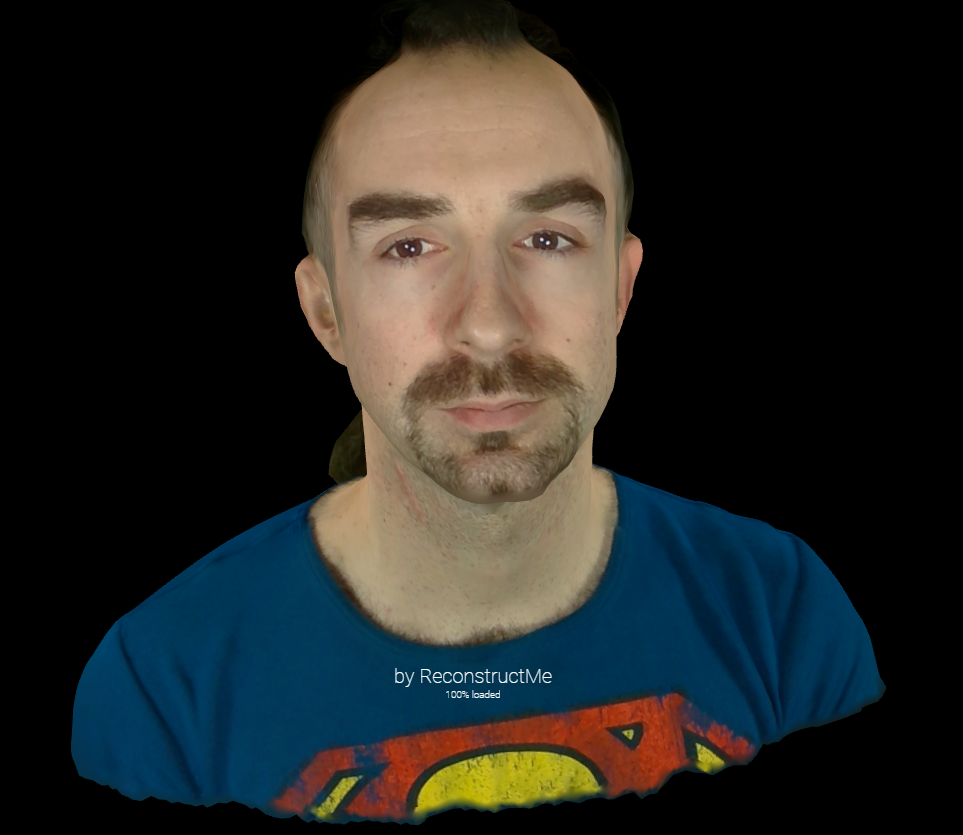

So, we thought about ways to improve the usability of the system and came up with the following. In the video linked below you can see a new low-cost 3D scanning device that does not lose tracking, no matter how jerky the movements are.

Features at a glance

Robustness

The new system is robust to any kind of jerky movement. Move naturally and never lose tracking again. If you put the scanner aside for a break, you can immediately resume scanning from any location within the scanning area.

High accuracy

The system offers constant error behaviour across the scanning area. Accumulation of errors due to drift is suppressed. The tracking accuracy is largely independent of the surface material and geometric structure of the scene.

Low-cost components

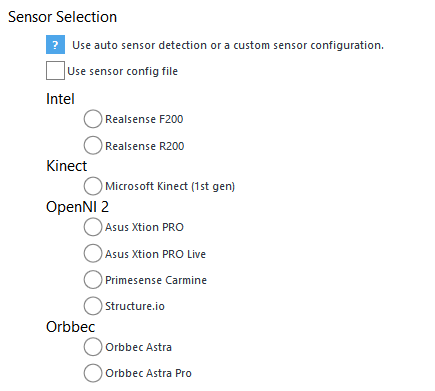

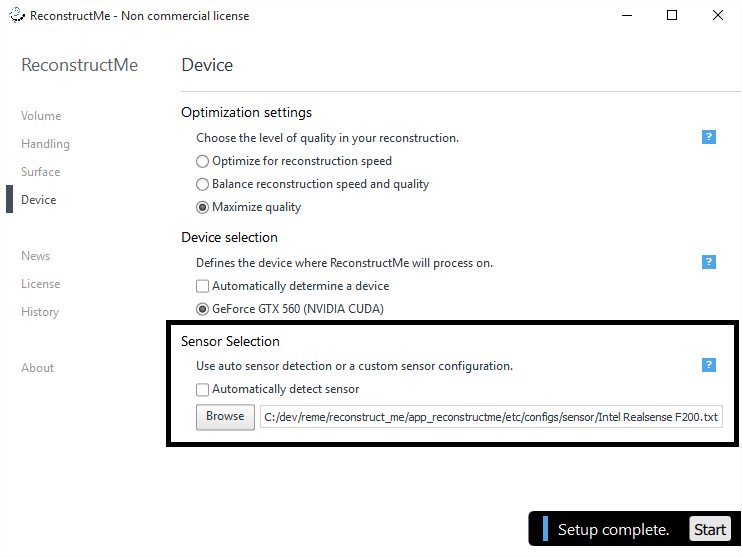

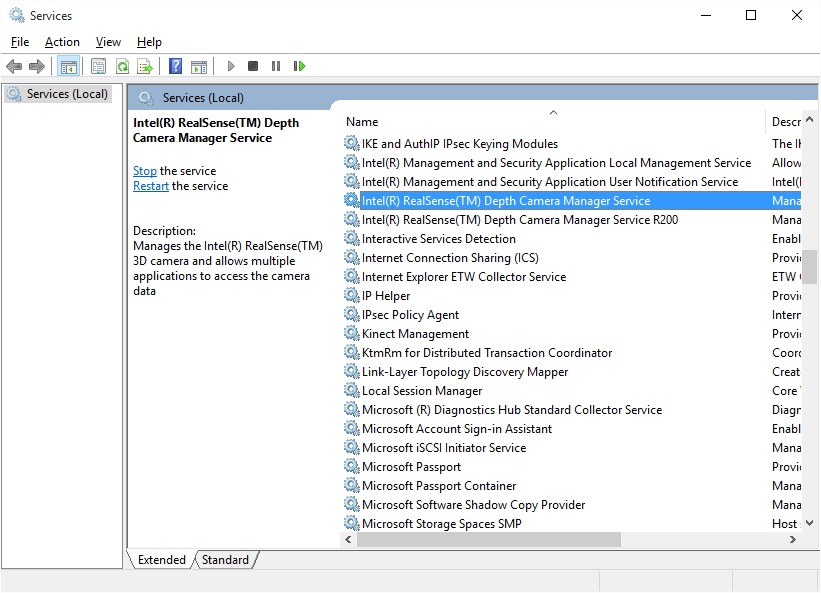

We’ve put considerable effort into cutting costs by using commodity hardware components.

Scale

The supported scanning area is flexible, ranging from a desktop to areas large enough to fit an entire car.

We plan to release more material soon. We hope we’ve piqued your interest. Stay tuned!